Chaining is a generic method of generating maximal inequalities on the suprema of random processes.

Suppose we have a collection of random variables indexed by some set which we assume is a centered (zero-mean) sub-Gaussian process in the sense that

for some metric . The ultimate goal is to bound but this is done via bounding

If is mean-zero, then for any so we often focus on the quantity .

Overview

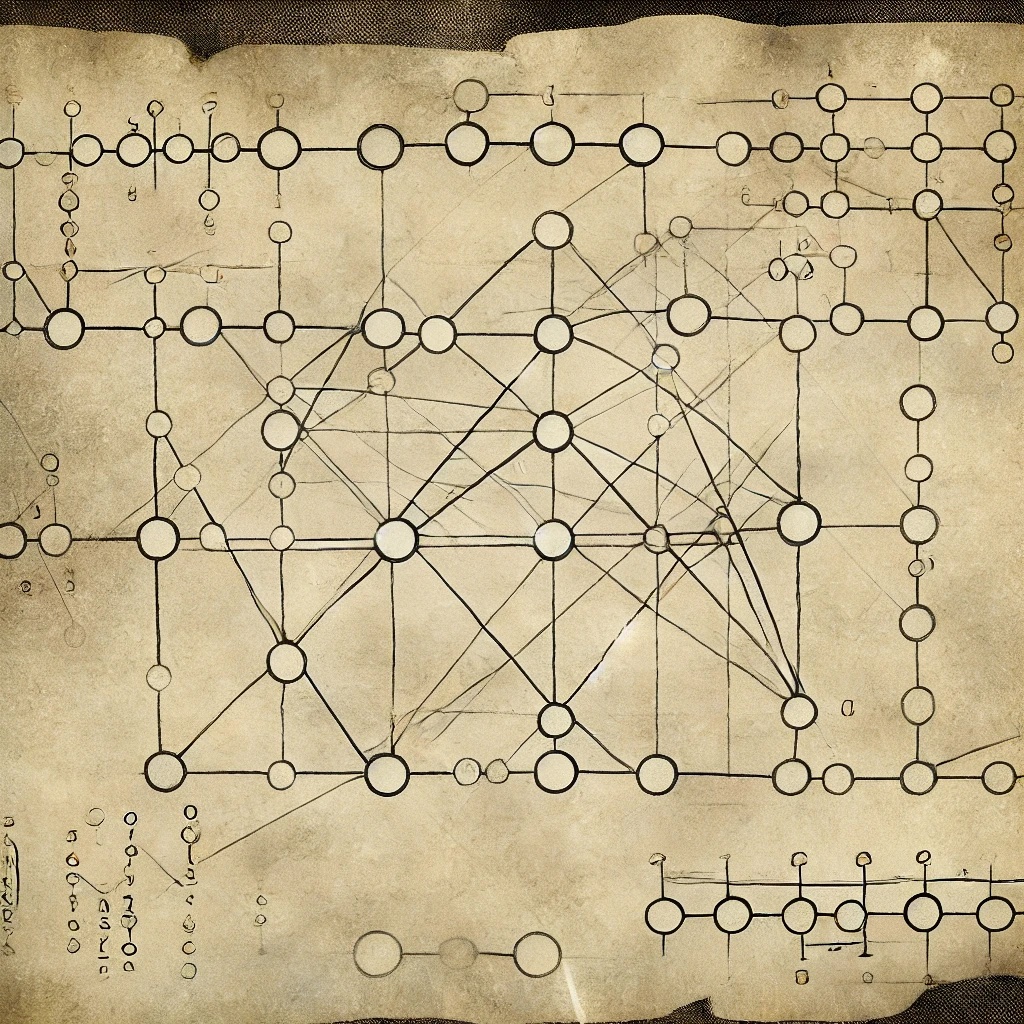

The idea is to decompose as

where and for all large enough (so that the sum is really a finite sum). This is the “chain”. Here is a map from where

So is the approximation of within . We enforce that is finite, so that union bound type arguments will apply. In particular we set , which looks ridiculous I know, but we will want exponential growth in log-space. We let be the closest point to in , so that

The intuition is the following:

- Bound using that is a sub-Gaussian process.

- Union bound over all elements of (both are finite).

- Union bound over all (again finite).

- Use the chaining decomposition above to provide a bound on .

Chapter 2.2 in Talagrand’s book below provides the details. We end up with a bound of the form:

where

where we’ve used the triangle inequality . This gives .

There are two main ways of dealing with . One is generic chaining, and one is Dudley chaining. The latter is looser than the former, but sometimes easier to compute.

References

- Upper and lower bounds for stochastic processes, Talagrand.